Machine-Proving of Entropy Inequalities

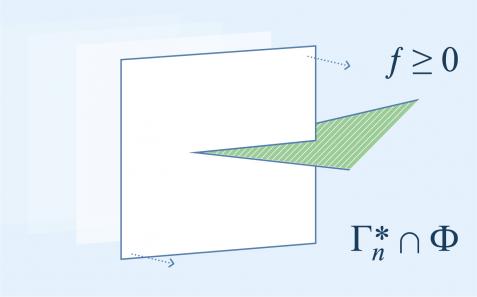

The entropy function plays a central role in information theory. Constraints on the entropy function in the form of inequalities, viz. entropy inequalities (often conditional on certain Markov conditions imposed by the problem under consideration), are indispensable tools for proving converse coding theorems. In this expository article, we give an overview of this fundamental subject. After presenting a geometrical framework for the entropy function, we explain how an entropy inequality can be formulated, with or without constraints on the entropy function. Among all entropy inequalities, Shannon-type inequalities, namely those implied by the nonnegativity of Shannon’s information measures, are best understood. The main focus of this article is the verification of Shannon-type inequalities, which in fact can be formulated as a linear programming problem. ITIP, a software package developed for this purpose, as well as two of its variants, AITIP and PSITIP, are discussed. This article ends with a discussion on the hardness of verifying entropy inequalities in general.